The recent Dorkbot show seemed to go off nicely - it was great to be part of such a strong show of local work (some documentation). I showed some prints from Limits to Growth, as well as a more experimental process piece, Watching the Street - a (sub)urban remake of Watching the Sky.

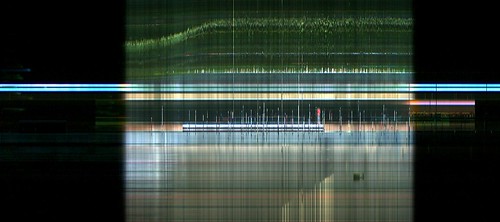

Credit to Nathan McGinness for the suggestion: use the same time-lapse / slit-scan technique to image change in an urban environment. Technically, the setup was fairly straightforward. Instead of a digital stills camera I used a webcam (in portrait orientation), and wrote a simple Processing script to save stills at one-minute intervals, while extracting and compiling one-pixel slices into 24-hour composites. The webcam was installed in a window box on the gallery street front, with a view across the road, under a street tree, to one of Manuka's low-rise shopping arcades (above). I also attached a printer to the installed rig, so that a new composite could be produced and pinned to the wall each day. So here, some of the resulting images, and a bit of commentary.
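For anyone curious about the slicing step, here is a minimal sketch of the idea in Python. It is not the Processing script used for the piece; the function names, the nested-list image format and the toy data are all assumptions for illustration. Each frame contributes one pixel-wide column, and the columns are laid side by side in capture order, so at one frame per minute a full day comes out 1440 columns wide.

```python
# Hypothetical sketch of slit-scan compositing (not the original Processing script).
# A "frame" here is a list of rows, and each row is a list of pixel values.

FRAMES_PER_DAY = 24 * 60  # one still per minute gives 1440 columns per day


def extract_slice(frame, x):
    """Return the one-pixel-wide column at position x, top to bottom."""
    return [row[x] for row in frame]


def build_composite(frames, x):
    """Put the slice from frame t at column t of the composite image."""
    columns = [extract_slice(frame, x) for frame in frames]
    height = len(columns[0])
    # Transpose the list of columns back into rows, so the result is an image.
    return [[col[y] for col in columns] for y in range(height)]


# Toy data: 4 frames, each 3 pixels wide and 2 high.
# Each pixel value encodes where it came from: t * 100 + x * 10 + y.
frames = [[[t * 100 + x * 10 + y for x in range(3)] for y in range(2)]
          for t in range(4)]
composite = build_composite(frames, x=1)
```

In the toy example the composite is 4 pixels wide (one column per frame) and 2 high, and every value comes from column 1 of its source frame.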

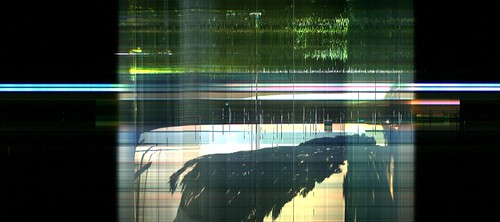

The image-gathering process got off to a rocky start. After a few hours, the webcam came unstuck from the side of the window-box, and lay forlornly on its side for the next 48 hours (here's what that looks like). I gaffed it back in place just before the opening, and restarted the capture in time to catch some gallery-goers loitering around out the front.

These two are Friday the 7th and Saturday the 8th of November, the first two full-day composites. Those striped rectangular chunks around mid-frame are cars, parked in the 30-minute loading zone across the road. Some stay for a few minutes, a couple for what looks like an hour. Of course on the Saturday, the loading zone doesn't operate, and there's a single car parked in it from mid-morning to mid-afternoon. The single-pixel vertical shards give an indication of passing car and pedestrian traffic.

A quiet, sunny Sunday the 9th; the form hinted at on the 8th reveals itself as the shadow of the big plane tree, creeping across the footpath. Then the following Friday the 14th. It's all happening: lots of car and pedestrian traffic, changes in sunlight, and what looks like an afternoon breeze in the foliage as well. The dominant, bluish horizontal stripe in all these images is the neon sign on the shopping centre - which runs all night. The orange rectangle that extends into the evening is the interior light of a shop - which you'll notice switches off at slightly different times each night.

So you'll notice that as in Watching the Sky, I'm persisting in reading these as visualisations of the environment, as well as digital images in themselves. I'm struck by how this simple, indiscriminate process reveals both expected and unexpected patterns, and continues to provoke new questions. This despite, or I would argue because of, its openness to multiple material / temporal systems.

In an interesting bit of synchronicity, I was teaching in the UTS Street as Platform masterclass with Dan Hill (more on that soon) while this piece was running. Could a simple visualisation process like this function "informationally", as it were; to help answer real questions about a very specific slice of urban environment, in near-real time? More interesting for me, could it function in that way without prescribing the question in advance - that is, could it support an open-ended process of exploration and interpretation?

I'm planning to build an interactive version of this piece, to try out these ideas. In these static visualisations there's a huge amount of data missing: I set the slice point more-or-less arbitrarily, so there are 479 other potentially interesting slices to browse. It would be nice to be able to change the slice point dynamically, as well as navigating through the source images. I notice that Processing 1.0 (yay!) now supports threaded loading of images: could come in handy. Meanwhile, the full set of composite images is up on Flickr.

Thursday, November 27, 2008

Watching the Street

Posted by Mitchell at 9:35 pm

Labels: materiality, photography, processing, projects, urban, visualisation

5 comments:

Love the photos. I've tried experimenting with this technique as a means of representing personal energy use in a (possibly) more meaningful manner than just a plain graph. So I'm creating similar time-sliced photographs but superimposing a graph of my electricity use, as measured by a Current Cost meter.

Are your images using the same slice for each column of pixels in the final image? I've been taking each slice from the corresponding minute (approximately), so the left of the picture is earlier than the right, which means it composites into the original frame.

I'm also finding my webcam captures aren't very good quality. But I also guess the light here in England isn't quite as good as Sydney :)

Hey Tristan, thanks - your graphs are excellent, I especially like the idea of applying this to a domestic interior, a very intimate form of personal data vis. See Miska Knapek's work for more re-composed timelapse slit scans like yours.

Yes for mine the slice is the same - a more or less arbitrary vertical slice. I'm planning to build a browser soon for the whole dataset, that will let you see different slice positions.
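To make the difference between the two approaches concrete, here is a rough Python sketch of the moving-slice variant described in the question above, where the slice position advances with time so the result fits back into the original frame. The function name, the nested-list image format and the frame-to-column mapping are assumptions for illustration, not anyone's actual code.

```python
# Hypothetical sketch: the slice position moves with time, so the output has
# the same dimensions as a source frame and the left edge is earlier than the right.
# A "frame" is a list of rows, and each row is a list of pixel values.

def moving_slice_composite(frames):
    """Fill output column x from the frame captured at the matching point in time."""
    n = len(frames)
    height = len(frames[0])
    width = len(frames[0][0])
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            t = x * n // width  # map column position to a frame index
            row.append(frames[t][y][x])
        out.append(row)
    return out


# Toy data: 2 frames, each 4 pixels wide and 1 high; value = t * 10 + x.
frames = [[[t * 10 + x for x in range(4)]] for t in range(2)]
result = moving_slice_composite(frames)
```

Here the left half of the result is taken from the first frame and the right half from the second. With a fixed slice, by contrast, every column would come from the same x position and the output width would equal the number of frames.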

Webcam quality is bad, true. For my earlier experiments I used a tethered digital still camera - far superior, but then my camera got nicked. I think you could improve webcam quality by doing frame averaging: grab and store half a dozen frames, then average the pixel values. Because the CCD noise is random it will cancel itself out across multiple frames. The downside is that you also lose some temporal resolution (i.e. you're shooting at perhaps 3 fps instead of 30).

The Miska Knapek stuff is great, thanks for that, and I'll check out my digital cameras to see if I can control any of them. I was just having a go at creating a composite image averaging all of the captures but can't quite get Processing to do it right. Not sure it will remove noise though - doesn't averaging noise just give noise?

re. digital cameras, seems few can do long term tethered shooting. I was using an old Canon G3 - their software has an intervalometer, and the camera will run on an AC adapter. My new G9 will only run from battery power!

re. frame averaging, it works! Because you're not just averaging noise, but multiple instances of some value +/- noise. The noise cancels out and you're left with the value...
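A quick way to convince yourself is to simulate it. The sketch below (Python, with made-up values: a flat grey "scene", Gaussian noise, half a dozen frames) averages several noisy copies of the same image and compares the error against a single frame. The names and the noise model are assumptions for illustration; real sensor noise will be messier.

```python
import random

# Hypothetical sketch of frame averaging on simulated data.
# A "frame" is a list of rows, and each row is a list of pixel values.


def average_frames(frames):
    """Average pixel values position by position across equally sized frames."""
    n = len(frames)
    height, width = len(frames[0]), len(frames[0][0])
    return [[sum(f[y][x] for f in frames) / n for x in range(width)]
            for y in range(height)]


def mean_abs_error(frame, truth):
    """Mean absolute difference between a frame and the true scene."""
    total = sum(abs(p - q)
                for row, true_row in zip(frame, truth)
                for p, q in zip(row, true_row))
    return total / (len(frame) * len(frame[0]))


def add_noise(truth, sigma, rng):
    """Return a copy of the scene with independent Gaussian noise on each pixel."""
    return [[value + rng.gauss(0, sigma) for value in row] for row in truth]


rng = random.Random(0)                      # seeded so runs are repeatable
truth = [[100.0] * 50 for _ in range(50)]   # assumed flat grey scene
frames = [add_noise(truth, 10.0, rng) for _ in range(6)]  # half a dozen frames

single_error = mean_abs_error(frames[0], truth)
averaged_error = mean_abs_error(average_frames(frames), truth)
```

Comparing `single_error` with `averaged_error` shows the averaged frame sitting closer to the true scene. For independent noise the spread shrinks by roughly the square root of the number of frames averaged, so six frames should cut it to a bit under half.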

thanks for the mention Mitchell.

by the way, regarding photographing using the G9 - all you need to do is to buy an external AC source - Canon sells them. I'm using a Nikon D80 and a Canon G9, both with (different) external power sources.

The G9 works fine, but it gets a little tired after 24 hours of shooting 10mpix images every 20 seconds. If the frequency is 30 seconds, I should think it works better.

In any event, the G9 works fine with an external AC. (Though one has to buy it separately.... buuuh Canon! ).

m
